When searching for data, we often rely on straightforward criteria like exact matches or proximity in attributes such as location. These types of matches are easy to calculate, as they involve direct comparisons. However, there’s a more complex challenge that arises when we need to determine if two things are similar in meaning. How can we quantify and calculate the similarity between entities based on their semantic content?

Consider a scenario where you want to search for “ingredients like broccoli” in a vast dataset of recipes. How do you go about this task without manually creating exhaustive tables of predetermined similar ingredients? And what if a user wants to explore “ingredients similar to my personal taste profile”? Attempting to precompute such intricate semantic relationships, even with human assistance, can quickly become a daunting and impractical endeavor.

Fortunately, there’s a solution to this conundrum, and it’s a fundamental concept in the field of natural language processing (NLP): Semantic Similarity.

OK, but what is Semantic Similarity?

Semantic similarity is the measure of how closely related or alike two pieces of text or data are in terms of their meaning. In essence, it allows us to quantify the similarity in meaning between words, phrases, or even entire documents. This powerful concept forms the bedrock of many NLP applications, enabling us to perform tasks that go beyond simple string matching.

So, how does semantic similarity work? At its core, it relies on the idea that words and phrases with similar meanings should be close to each other in some kind of mathematical space. This space is often represented as an embedding, where words or concepts are mapped to vectors in a high-dimensional space. These embeddings are learned from large corpora of text data using advanced machine learning models like Large Language Models (LLMs).

LLM Embeddings and Semantic Similarity

One of the groundbreaking developments in recent years has been the advent of LLMs like GPT-3.5 (you may know it via ChatGPT), Vicuna, and others. These models have the ability to generate highly context-aware word embeddings that capture rich semantic information. They can represent words, phrases, and even entire sentences in a way that reflects their semantic relationships.

To calculate semantic similarity using LLM embeddings, we can employ various techniques such as cosine similarity or Euclidean distance between the vectors that represent the entities in question. The closer these vectors are in the high-dimensional space, the more similar the meanings of the corresponding words or phrases.

Given enough data points, we are able to extract some really useful information based on what values are grouped together. Similar data will form clouds or groups in our maths space, so nearby ideas are worth looking at in the search for similarity.

Distance, Dimensions, Data

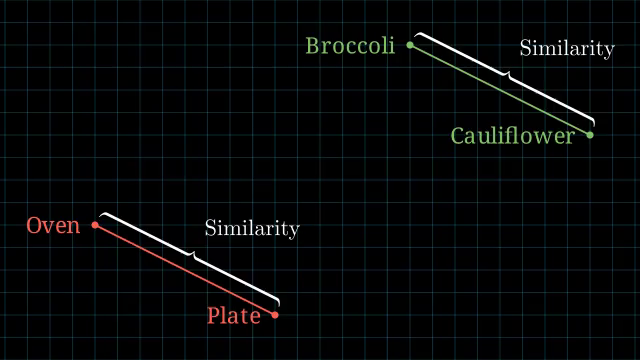

Let’s break that down a little bit and avoid the math part for a second — just assume that we know there is some math function that given two data points, we can tell how close together or far apart they are. (Like drawing a line between two dots, you can tell how long that line is by measuring it.) We’ll call this the “ruler”.

We’ll start with a very simple example. Four words: Broccoli, Cauliflower, Oven, and Plate. You probably already have a mental feeling for how these relate to each other. That feeling is what we’re exploring and codifying.

From here, let’s try to visualize what that relationship might look like. We’ll move the words to different parts of the graph, using a dots to give each an exact location. We’ll group broccoli and cauliflower together as both being delicious vegetables, while we’ll group oven and plate together as being implements in the kitchen. The distance between our dots, then, becomes how similar these words/ideas are to each other – our ruler in action.

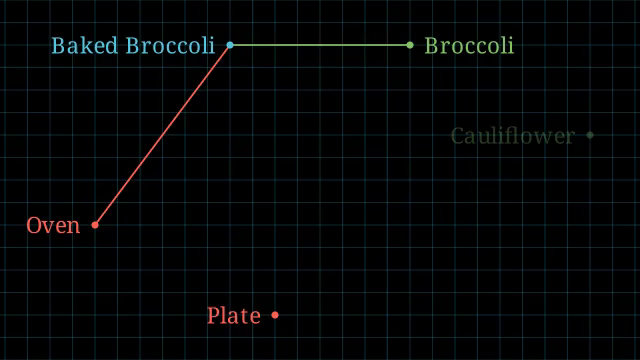

Next, let’s introduce the idea of Baked Broccoli. If you were to give that a location on the graph, where might you put it? Here’s how I would arrange it:

Baked Broccoli is a kind of Broccoli so it’s close to that, but you often bake things in the Oven. As such, I want to edge it closer to that word as well. Therefore, Baked Broccoli gets to live in a cozy spot between both. Just a bit closer to Broccoli, though, since you can bake foods in other ways – like on your dashboard in the hot sun during the summer. (Chom and I don’t recommend that approach, though.)

By building up a large graph of these different nodes with some given meaning, we can get a good idea of what’s related to what. Since we would eventually run out of space to separate things that are unrelated, most LLMs use a much, much larger embedding space – thousands of dimensions, instead of just the 2D world we’re using for our examples. That way, there will always be a spot to squeeze in one more word and not have it accidentally too close to another word it doesn’t relate to.

In addition, in our 2D example, notice that Broccoli and Baked Broccoli are both still about the same distance from Plate as a side effect. They both go on plates when served as a meal, after all. Yet again, our ruler is doing its job! It is these kinds of discoveries through distance groupings that let us make use of embeddings to find semantic similarity, even for words we didn’t have in our initial training data.

Applications of Semantic Similarity

Semantic similarity has a wide range of applications across industries and domains:

- Information Retrieval: It powers search engines to provide more accurate and relevant results by understanding the intent behind a query.

Example: Google can trim out discussion about growing broccoli when you’re searching for cooking broccoli, and likewise if you are looking for articles about cooking food, you won’t get articles about cooking other things — like cooking the books. - Recommendation Systems: Semantic similarity helps recommend products, movies, or content by identifying items that are contextually similar to the user’s preferences.

Example: Like broccoli? Here’s cauliflower, brussel sprouts, and green beans. Semantics doesn’t just have to be a word’s meaning – it could also be any other measurable quantity with meaning, like flavor, smell, color, and so on. Surprisingly, when you scan huge amounts of human writing, those hidden meanings end up in the embeddings of an LLM by incidence! I guess humans very much like to talk about things like how broccoli and cauliflower both look like different colored chef hats? - Content Summarization: It aids in generating concise and meaningful summaries of long texts by identifying the most semantically important content.

Example: Don’t have time to read the full Rolling Stone article? Here’s a one paragraph summary — all thanks to finding a smaller group of words that matches the same longer article in meaning via semantic similarities of the constituent words. - Machine Translation: Semantic similarity assists in improving the quality of machine translation by aligning words and phrases with their closest counterparts in the target language.

Example: We create a similar semantic mapping like we did for broccoli etc above, but this time we group words by how closely they are in meaning to each other. Ordinateur and Computer, Cagna and Dog, Taco and… Taco… - Content Classification: It’s used for categorizing and organizing text data by similarity, enabling better content management.

Example: Automatically have your blog posts organized into their appropriate labels – #game-dev #artificial-intelligence #cooking . - Sentiment Analysis: Semantic similarity can be used to gauge the similarity in sentiment between different pieces of text, helping companies understand public opinion.

Example: Know if those comments on your blog post are angry or not (and tally the amount) without having to wade through and read every one — nobody wants to do that.

Summing it up, semantic similarity is the wizardry in the world of natural language processing (NLP), transforming how computers can figure out human lingo. By playing around with LLM embeddings and some slick algorithms, we’re teaching machines to suss out meanings and connections in our writing. This magic is behind making our searches more than just keyword bingo, and recommendations more like mind-reading. As we keep pushing the boundaries in NLP, we’re getting closer to having our gadgets not just hear, but really listen and understand us — to be able to put our organic brains together with their bionic ones for something greater than the sum of its parts!

Next up…

I want to dive in to a little more maths about the embeddings and also talk about how we actually gather training data. My simplified example in this article leaned heavily on the human intuition of understanding, but didn’t really touch on how we actually get the computer to share that intuition.

Likewise, I want to give a visual demonstration of a real world example, because there’s magic afoot that’s worth witnessing!